Log-Euclidean Kernels for Sparse Representation and Dictionary Learning

Peihua Li1 and Qilong Wang2 and Wangmeng Zuo3,4 and Lei Zhang4

1 Department of Information and Communication Engineering, Dalian University of Technology

2 Department of Computer Science, Heilongjiang University

3 Department of Computer Science, Harbin Institute of Technology

4 Department of Computing, The Hong Kong Polytechnic University


Abstract:

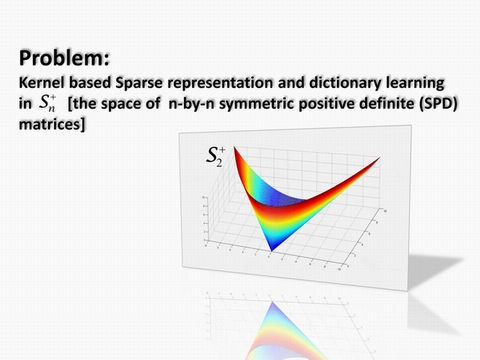

Symmetric positive definite (SPD) matrices have been widely used in image and vision problems. Recently there has been growing interest in studying sparse representation (SR) of SPD matrices, motivated by the great success of SR for vector data. Though the space of SPD matrices is well known to form a Lie group that is a Riemannian manifold, existing work fails to take full advantage of its geometric structure. This paper attempts to tackle this problem by proposing a kernel-based method for SR and dictionary learning (DL) of SPD matrices. We show that the space of SPD matrices, with the operations of logarithmic multiplication and scalar logarithmic multiplication defined in the Log-Euclidean framework, is a complete inner product space. We can thus develop a broad family of kernels that satisfy Mercer's condition. These kernels characterize the geodesic distance and can be computed efficiently. We also account for the geometric structure in the DL process by updating atom matrices in the Riemannian space instead of in the Euclidean space. The proposed method is evaluated on various vision problems and shows notable performance gains over state-of-the-art methods.
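To make the idea in the abstract concrete, the following is a minimal sketch of one kernel that could belong to such a family: a Gaussian kernel applied to the Frobenius distance between matrix logarithms. The choice of a Gaussian form, the function names, and the `gamma` parameter are assumptions made for illustration; this is not the authors' Matlab demo code.

```python
import numpy as np

def spd_log(S):
    """Matrix logarithm of an SPD matrix via its eigendecomposition."""
    w, V = np.linalg.eigh(S)          # S = V diag(w) V^T with w > 0
    return (V * np.log(w)) @ V.T      # V diag(log w) V^T

def log_euclidean_distance(S, T):
    """Frobenius norm of log(S) - log(T)."""
    return np.linalg.norm(spd_log(S) - spd_log(T), ord="fro")

def log_euclidean_gaussian_kernel(S, T, gamma=1.0):
    """Illustrative kernel: exp(-gamma * d(S, T)^2). gamma is a hypothetical bandwidth."""
    d = log_euclidean_distance(S, T)
    return np.exp(-gamma * d ** 2)

def gram_matrix(mats, gamma=1.0):
    """Kernel (Gram) matrix for a list of SPD matrices of equal size."""
    logs = np.stack([spd_log(M).ravel() for M in mats])
    sq = np.sum(logs ** 2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * logs @ logs.T
    return np.exp(-gamma * np.maximum(d2, 0.0))  # clip tiny negative round-off
```

Because the matrix logarithm maps each SPD matrix to a symmetric matrix, the logs can be computed once and the kernel evaluated with ordinary vector operations, which is why `gram_matrix` flattens them up front.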


Peihua Li, Qilong Wang, Wangmeng Zuo, and Lei Zhang. Log-Euclidean Kernels for Sparse Representation and Dictionary Learning. In: IEEE International Conference on Computer Vision (ICCV), 2013. [PDF] [BibTex] [Poster] [Spotlight]


Demo Matlab Code:

Face recognition using Log-Euclidean Kernel for SPD matrices. DOWNLOAD
