Fast Compressive Tracking

Kaihua Zhang¹, Lei Zhang¹, Ming-Hsuan Yang²

¹Dept. of Computing, The Hong Kong Polytechnic University, Hong Kong

²Electrical Engineering and Computer Science, University of California at Merced, United States

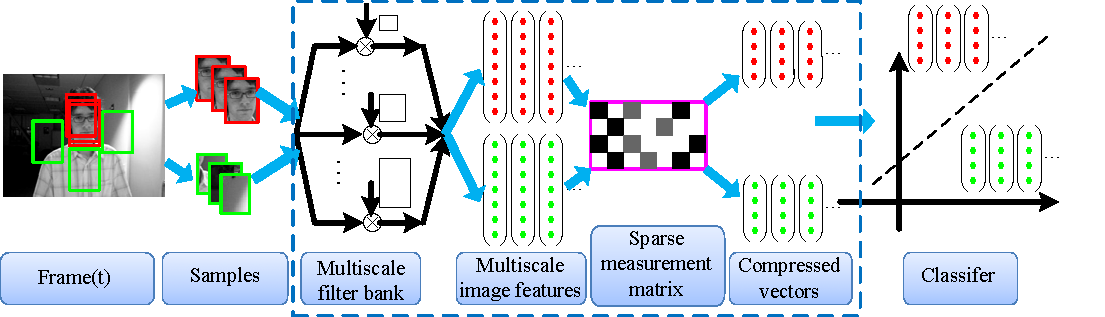

(a) Updating classifier at the t-th frame

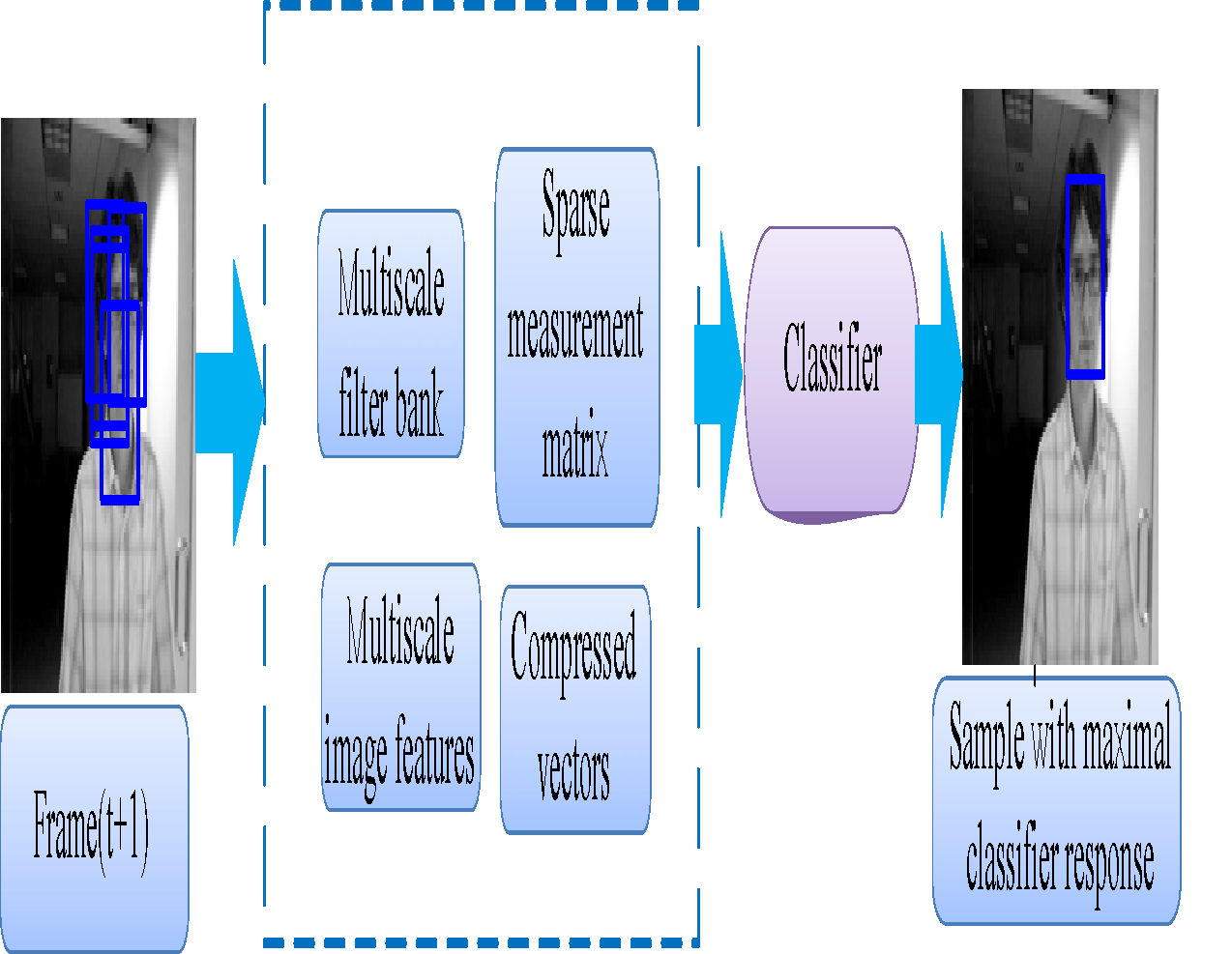

(b) Tracking at the (t+1)-th frame

ABSTRACT

Developing effective and efficient appearance models for robust object tracking is challenging because of factors such as pose variation, illumination change, occlusion, and motion blur. Existing online tracking algorithms often update their models with samples from observations in recent frames. Although much success has been demonstrated, several issues remain to be addressed. First, while these adaptive appearance models are data-dependent, there is not a sufficient amount of data for online algorithms to learn from at the outset. Second, online tracking algorithms often encounter the drift problem: as a result of self-taught learning, misaligned samples are likely to be added and to degrade the appearance models. In this paper, we propose a simple yet effective and efficient tracking algorithm with an appearance model based on features extracted from a multi-scale image feature space with a data-independent basis. Our appearance model employs non-adaptive random projections that preserve the structure of the image feature space of objects. A very sparse measurement matrix is adopted to efficiently extract the features for the appearance model. We compress samples of the foreground target and the background using the same sparse measurement matrix. The tracking task is formulated as a binary classification problem via a naive Bayes classifier with online update in the compressed domain. A coarse-to-fine search strategy is adopted to further reduce the computational complexity of the detection procedure. The proposed compressive tracking algorithm runs in real time and performs favorably against state-of-the-art algorithms on challenging sequences in terms of efficiency, accuracy, and robustness.
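The abstract names three computational pieces: a very sparse random measurement matrix that compresses high-dimensional features, a naive Bayes classifier updated online in the compressed domain, and a coarse-to-fine search over candidate locations. The Python sketch below illustrates these ideas under stated assumptions. It is not the authors' released code. Assumptions: the matrix entries follow the very sparse random projection rule (±sqrt(s) each with probability 1/(2s), zero otherwise); the input `x` is a generic vector standing in for the multi-scale image features; each compressed feature is modeled per class as a Gaussian with a uniform class prior; the learning rate `lam = 0.85`, the initialization on the first update, and the `(radius, step)` pairs for the coarse and fine grids are illustrative values. All function and class names are hypothetical.

```python
import math
import random


def sparse_measurement_matrix(n_low, n_high, s, seed=0):
    """Very sparse random projection: each entry is sqrt(s) * (+1 w.p. 1/(2s),
    0 w.p. 1 - 1/s, -1 w.p. 1/(2s)). Each row keeps only its nonzeros as
    (column, value) pairs, so projecting costs O(nonzeros) per row."""
    rng = random.Random(seed)
    scale = math.sqrt(s)
    rows = []
    for _ in range(n_low):
        row = []
        for j in range(n_high):
            u = rng.random()
            if u < 1.0 / (2 * s):
                row.append((j, scale))
            elif u < 1.0 / s:
                row.append((j, -scale))
        rows.append(row)
    return rows


def compress(rows, x):
    """Compressed feature vector v = R x, touching only nonzero entries of R."""
    return [sum(val * x[j] for j, val in row) for row in rows]


class OnlineNaiveBayes:
    """Gaussian naive Bayes on compressed features with a uniform class prior.
    `lam` weights the old model in the running update (assumed default 0.85)."""

    def __init__(self, n, lam=0.85, eps=1e-6):
        self.lam, self.eps = lam, eps
        self.mu = {0: [0.0] * n, 1: [0.0] * n}
        self.sigma = {0: [1.0] * n, 1: [1.0] * n}
        self.seen = {0: False, 1: False}

    def update(self, samples, label):
        """Blend the per-feature mean/std of the new samples into the class model."""
        m, lam = len(samples), self.lam
        for i in range(len(self.mu[label])):
            vals = [v[i] for v in samples]
            mean = sum(vals) / m
            std = math.sqrt(sum((a - mean) ** 2 for a in vals) / m)
            if not self.seen[label]:
                # First update: take the sample statistics directly (assumption).
                self.mu[label][i], self.sigma[label][i] = mean, std
                continue
            mu_old, sig_old = self.mu[label][i], self.sigma[label][i]
            self.sigma[label][i] = math.sqrt(lam * sig_old ** 2 + (1 - lam) * std ** 2
                                             + lam * (1 - lam) * (mu_old - mean) ** 2)
            self.mu[label][i] = lam * mu_old + (1 - lam) * mean
        self.seen[label] = True

    def _log_gauss(self, v, mu, sigma):
        sigma = max(sigma, self.eps)  # guard against zero variance
        return -math.log(sigma) - (v - mu) ** 2 / (2 * sigma ** 2)

    def score(self, v):
        """H(v) = sum_i [log p(v_i | y=1) - log p(v_i | y=0)]; > 0 favors the target."""
        return sum(self._log_gauss(vi, self.mu[1][i], self.sigma[1][i])
                   - self._log_gauss(vi, self.mu[0][i], self.sigma[0][i])
                   for i, vi in enumerate(v))


def _offsets(radius, step):
    k = radius // step
    return [i * step for i in range(-k, k + 1)]


def coarse_to_fine(score_at, center, coarse=(25, 4), fine=(10, 1)):
    """Search a sparse grid within a large radius, then a dense grid around the
    best coarse location. The (radius, step) pairs are assumed values."""
    best = center
    for radius, step in (coarse, fine):
        cx, cy = best
        cands = [(cx + dx, cy + dy)
                 for dx in _offsets(radius, step) for dy in _offsets(radius, step)
                 if dx * dx + dy * dy <= radius * radius]
        best = max(cands, key=score_at)
    return best
```

In a tracker built this way, `update` would be called each frame with compressed positive samples cropped near the current target location and negative samples from farther away, and `coarse_to_fine` would receive a `score_at` function that crops the patch at a location, compresses it with the same matrix, and returns `score`. Choosing `s` around `n_high / 4` leaves only a handful of nonzeros per row, which is what keeps `compress` cheap.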

PAPER

Real-Time Compressive Tracking. Kaihua Zhang, Lei Zhang, Ming-Hsuan Yang. ECCV 2012.

[website]

Fast Compressive Tracking, submitted.

SOURCE CODE

DATA

The six sequences we collected ourselves can be downloaded from the following links:

Biker [Download data (zip)] Bolt [Download data (zip)] Shaking2 [Download data (zip)]

Chasing [Download data (zip)] Goat [Download data (zip)] Pedestrian [Download data (zip)]

Some other datasets used in our paper can be downloaded from the following websites:

http://vision.ucsd.edu/~bbabenko/project_miltrack.shtml

http://cv.snu.ac.kr/research/~vtd/

http://gpu4vision.icg.tugraz.at/index.php?content=subsites/prost/prost.php

TRACKING RESULTS

If you have any questions, please contact

Kaihua Zhang, zhkhua at gmail dot com

Kaihua Zhang. Last updated: 2013-01-31